Merchant Data Onboarding

Banyan provides the infrastructure for merchants to send receipt-level data directly to financial institutions and fintechs without having to enter into contracts with each one. Financial institutions can then use the receipt data to better serve the consumer through enhanced fraud detection, more relevant card-linked offers, or line-item information displayed for each transaction in the banking or budgeting application.

Integrate your receipt-level and taxonomy data with control and transparency using one of the many ingestion options that Banyan provides:

Batch Push

Our preference is to receive fresh data at least once per day, but we can work with whatever cadence makes sense for your business. Ensuring that there are no gaps in the data and that we receive all transactions is paramount. A common practice is to have a cutoff time for a day's transactions. For example, a daily file that gets uploaded at 11pm UTC on June 1, 2021 will have transactions through 5pm UTC (the cutoff time) on June 1, 2021. Subsequently, the June 2, 2021 file will contain all transactions from 5:00:01pm UTC on June 1 through 5:00pm UTC on June 2.
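As a minimal sketch of the cutoff practice described above, the following computes the time window covered by one daily file. The function name `file_window` is hypothetical, and the 5pm UTC cutoff is only the example value from the text; use whatever cutoff fits your business.

```python
from datetime import date, datetime, time, timedelta, timezone

# Example cutoff from the text (5:00:00pm UTC); an assumption, not a requirement.
CUTOFF = time(17, 0, 0)


def file_window(file_date: date, cutoff: time = CUTOFF) -> tuple[datetime, datetime]:
    """Return the (start, end) timestamps, both inclusive, covered by one daily file."""
    end = datetime.combine(file_date, cutoff, tzinfo=timezone.utc)
    # The previous file ended at yesterday's cutoff, so this one starts one second later.
    start = end - timedelta(days=1) + timedelta(seconds=1)
    return start, end


start, end = file_window(date(2021, 6, 2))
print(start.isoformat(), "->", end.isoformat())
```

If your timestamps carry sub-second precision, comparing against the previous cutoff with a strict "greater than" instead of adding one second avoids dropping transactions that fall between 5:00:00pm and 5:00:01pm.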

Our pipeline does not reject data based on date of transaction. If there are interruptions in your data feed to Banyan, simply send all missed data alongside your current day's data. It will be processed without issue.

Banyan's data lake is built on Google Cloud Platform using Google Cloud Storage. Each merchant will have a dedicated bucket that will be provisioned for read and write access using Google Service Accounts and Roles. If your data is already staged in a different environment such as Amazon S3, we will take care of porting it over to the Banyan GCS data lake.

Banyan Preferred Method: GCS

During the data onboarding process we will provide you with a GCP service account that has the permissions necessary to push data into the bucket. The bucket contains an "input" folder into which batch files can be uploaded. If there are multiple types of files, for example one CSV for transactions and another for line items, there should be either a folder structure or a naming scheme to differentiate them. Any errors we encounter will be placed into an "errors" folder in the bucket, which is viewable by the provided service account. We'll work with you to resolve any errors encountered in the data.
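One possible naming scheme for differentiating file types is sketched below. The per-type subfolder and date-stamped file name are illustrative assumptions, as is the helper name `input_object_name`; the actual layout should be agreed on during onboarding.

```python
from datetime import date


def input_object_name(file_type: str, file_date: date, extension: str = "csv.gz") -> str:
    """Build an object name under the bucket's "input" folder.

    The subfolder-per-type layout and date-based file name are assumptions
    made for illustration, not a layout required by Banyan.
    """
    return f"input/{file_type}/{file_type}_{file_date.isoformat()}.{extension}"


print(input_object_name("transactions", date(2021, 6, 1)))
print(input_object_name("line_items", date(2021, 6, 1)))
```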

Batch Pull

We can also pull data from you in batch. This can be done via FTP/SFTP, or through direct GCS or S3 bucket access. Our preference is for data to be pushed to us, given that a partner (you) will have greater visibility into when data is ready and complete.

REST API

We currently support one-by-one submission of receipt data, formatted according to our schema, using a POST call. During the onboarding process, please indicate that you would like to use the API, and your account representative will grant you an access ID and API key, which are used to retrieve your bearer token. All of the details about how to configure your headers and body can be found here.
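The general shape of such a POST call can be sketched with Python's standard library. Everything specific here is a placeholder: the URL, the token value, and the payload field names are assumptions for illustration only, and the real endpoint, headers, and schema come from the API reference. The request is built but not sent.

```python
import json
import urllib.request


def build_receipt_request(url: str, bearer_token: str, receipt: dict) -> urllib.request.Request:
    """Build (but do not send) a POST request carrying one receipt as JSON."""
    body = json.dumps(receipt).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={
            "Authorization": f"Bearer {bearer_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder URL, token, and payload; none of these are real Banyan values.
request = build_receipt_request(
    "https://api.example.com/receipts",
    "YOUR_BEARER_TOKEN",
    {"transaction_id": "T1001", "auth_code": "A1B2C3", "card_last_four": "4242"},
)
print(request.get_method(), request.full_url)
```

Passing the built request to `urllib.request.urlopen` would submit it once the real URL and token are in place.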

Preferred Data Formats

Parquet (compressed with snappy)

Avro

TSV/CSV (compressed with gzip/lzo)

JSON

Our preference is that files in these formats not exceed 5GB uncompressed. If this is unacceptable or difficult, please talk to your onboarding partner to determine the best resolution.
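For the gzip-compressed CSV option, a minimal sketch using only the Python standard library might look like the following. The helper names, file name, and column names are hypothetical.

```python
import csv
import gzip
import os
import tempfile


def write_gzip_csv(path, header, rows):
    """Write a header and rows to a gzip-compressed CSV file."""
    with gzip.open(path, "wt", newline="", encoding="utf-8") as handle:
        writer = csv.writer(handle)
        writer.writerow(header)
        writer.writerows(rows)


def read_gzip_csv(path):
    """Read a gzip-compressed CSV file back into a list of rows."""
    with gzip.open(path, "rt", newline="", encoding="utf-8") as handle:
        return list(csv.reader(handle))


# Hypothetical file name and columns, written to a temporary directory.
path = os.path.join(tempfile.mkdtemp(), "transactions_2021-06-01.csv.gz")
write_gzip_csv(path, ["transaction_id", "total_amount"], [["T1001", "50.00"]])
print(read_gzip_csv(path))
```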

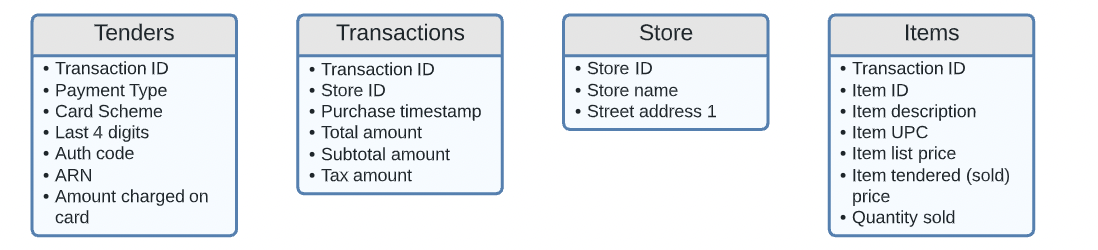

Batch Data Model Options

We understand that your purchase data may be dispersed among many warehouses or tables. We are happy to do the work of joining your data for you. If you are already sending this data to another partner and have it pre-joined, we will accept that as well.

Example Unflattened Data Layout

Sending Data History

After the QA phase of integration is over, we typically ask for a one-time dump of historical transaction, item, and tender data to be sent to Banyan. We ask that this historical data mirror, as closely as possible, the ongoing data feed that we have just vetted in QA. Providing data to us in this way makes for a smoother onboarding and faster monetization of your data. As an example, if the standard feed is daily, please break out your historical transfer into daily files before uploading to S3, GCS, or SFTP.
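Breaking a historical extract into daily files can be sketched as a simple grouping step. This sketch assumes each record carries an ISO 8601 timestamp in a field named `purchase_ts` (a hypothetical name) and groups by calendar date; if your ongoing feed uses a cutoff time instead, group by that window so the history mirrors the feed.

```python
from collections import defaultdict
from datetime import datetime


def split_by_day(records, timestamp_field="purchase_ts"):
    """Group records by the calendar date of their ISO 8601 timestamp."""
    by_day = defaultdict(list)
    for record in records:
        day = datetime.fromisoformat(record[timestamp_field]).date()
        by_day[day.isoformat()].append(record)
    return dict(by_day)


# Hypothetical historical records.
history = [
    {"transaction_id": "T1", "purchase_ts": "2021-05-30T09:15:00+00:00"},
    {"transaction_id": "T2", "purchase_ts": "2021-05-30T18:40:00+00:00"},
    {"transaction_id": "T3", "purchase_ts": "2021-05-31T11:05:00+00:00"},
]
daily = split_by_day(history)
for day in sorted(daily):
    print(day, len(daily[day]))
```

Each group can then be written out as its own file, one per day.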

Data Restatements

Through either the API or batch, Banyan can handle receiving the same transaction more than once. If new attributes are sent on the transaction, such as product details, those will be appended to the original receipt object created within the Banyan platform. However, some parts of the transaction cannot be changed without Banyan creating a new receipt entity for the transaction. Those include:

- Purchase Timestamp

- Unique Transaction ID

Not creating extraneous receipt objects is important for extracting accurate insights from the Banyan platform.
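The behavior described above can be modeled as keying each receipt on its transaction ID and purchase timestamp. This is an illustrative sketch, not Banyan's implementation, and the field names are hypothetical. How a changed value on an already-present attribute is handled is not described here, so the sketch leaves existing values untouched.

```python
def apply_restatement(receipts: dict, incoming: dict) -> dict:
    """Merge an incoming transaction into receipts keyed by (id, timestamp)."""
    key = (incoming["transaction_id"], incoming["purchase_ts"])
    existing = receipts.setdefault(key, {})
    for name, value in incoming.items():
        # Only append attributes that are new; existing values are kept as-is.
        existing.setdefault(name, value)
    return receipts


receipts = {}
apply_restatement(receipts, {"transaction_id": "T1", "purchase_ts": "2021-06-01T12:00:00", "total": "9.99"})
# Same identity with a new attribute: appended to the same receipt.
apply_restatement(receipts, {"transaction_id": "T1", "purchase_ts": "2021-06-01T12:00:00", "product_details": "coffee"})
# Changed timestamp: treated as a separate receipt.
apply_restatement(receipts, {"transaction_id": "T1", "purchase_ts": "2021-06-01T12:00:05", "total": "9.99"})
print(len(receipts))
```

The third call shows why a restated transaction should keep its original timestamp and ID: a one-field change in either produces an extraneous receipt.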

What to Expect During the Setup Process

There are several steps to complete before programmatic data transfer can begin (est. 4-6 weeks):

- Review the Banyan Data Dictionary (represented in this document by the schemas below) and input corresponding fields readily available for delivery to Banyan.

- Review Merchant inputs to Banyan Data Dictionary and answer questions/comments added within

- Investigate updates required to data fields (if any)

- Set up a delivery location for data

- Provide initial sample files for Banyan QA

- Conduct QA

- Resolve any known additions required or issues identified from Banyan QA

Ongoing Quality Control and Validations

Unless otherwise specified, data will be ingested into the Banyan platform as it is received. For both ingest methods (Batch and API), individual data fields are validated. If a validation is not satisfied, the record will be rejected with a 400 error and details of what could not be processed.

| Field | Description of Validation |
|---|---|
| auth_code | Must be 6 characters |
| card_last_four | Must be 4 characters |
| zip_code | Must be 5 characters |
| arn | Must be 11 characters |
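To catch these rejections before sending, the length rules in the table can be checked locally. The sketch below is a pre-flight check under stated assumptions, not Banyan's validator: the function name and error wording are hypothetical, and absent fields are skipped because the table does not say which fields are required.

```python
# Expected lengths taken from the validation table.
EXPECTED_LENGTHS = {"auth_code": 6, "card_last_four": 4, "zip_code": 5, "arn": 11}


def validate_record(record: dict) -> list[str]:
    """Return a list of length-validation errors for one record."""
    errors = []
    for field, length in EXPECTED_LENGTHS.items():
        value = record.get(field)
        if value is None:
            continue  # Assumption: absent fields are handled elsewhere.
        actual = len(str(value))
        if actual != length:
            errors.append(f"{field} must be {length} characters, got {actual}")
    return errors


print(validate_record({"auth_code": "A1B2C3", "card_last_four": "4242", "zip_code": "6060"}))
```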

In addition, there will be runtime checks that aggregations make sense across the different types of data. For example,

FAQs

How long does onboarding typically take?

The entire process typically takes 2-3 weeks. The part of the process most likely to lengthen this timeframe is Data QA, which may uncover work for the merchant to do in order to pull in additional fields.

How should I represent split tenders?

The file should include n rows per transaction/item, one for each of the n cards or distinct payment methods used. The tender type should show whether the payment was split between cash and card; if more than one card was used, the last4 should show which amount is associated with each card.
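As an illustration, a transaction paid with two cards and cash could be represented with three rows. The field names and tender type values below are hypothetical; the Banyan Data Dictionary defines the actual ones.

```python
from decimal import Decimal

# One row per payment method for the same transaction (hypothetical field names).
split_tender_rows = [
    {"transaction_id": "T1001", "tender_type": "CARD", "card_last_four": "4242", "amount": Decimal("30.00")},
    {"transaction_id": "T1001", "tender_type": "CARD", "card_last_four": "1881", "amount": Decimal("15.50")},
    {"transaction_id": "T1001", "tender_type": "CASH", "card_last_four": None, "amount": Decimal("4.50")},
]

# The per-tender amounts should add up to the transaction total.
transaction_total = sum(row["amount"] for row in split_tender_rows)
print(transaction_total)  # 50.00
```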

How should I represent returned items?

Returned items should have the same transaction id as the original transaction, with a negative value for total amount to indicate that money was returned to the customer. The corresponding item record(s), also with a negative value for amount, should be sent to Banyan as well.
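A return can be sketched as negated copies of the affected records. The helper name `make_return` and the field names are hypothetical, and the sketch assumes the return total is the sum of the returned item amounts.

```python
from decimal import Decimal


def make_return(transaction: dict, returned_items: list[dict]) -> tuple[dict, list[dict]]:
    """Build return records: same transaction id, negative amounts."""
    items = [{**item, "amount": -item["amount"]} for item in returned_items]
    total = sum((item["amount"] for item in items), Decimal("0"))
    return {**transaction, "total_amount": total}, items


# Hypothetical original purchase and the single item being returned.
purchase = {"transaction_id": "T1001", "total_amount": Decimal("50.00")}
returned = [{"transaction_id": "T1001", "sku": "SKU-1", "amount": Decimal("12.25")}]

return_txn, return_items = make_return(purchase, returned)
print(return_txn["total_amount"], return_items[0]["amount"])
```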

What if I don't have access to a required field within my data warehouse?

If a field that is preferred but not required is unavailable, no additional action needs to be taken. If it is one of our required fields, we may need to work with you to figure out where in your processing pipeline we can extract the necessary information.

Should I include PII with transaction information?

No. Banyan does not accept any PII in its data feeds.

What do you consider to be PII?

Any information that can help identify the customer: customer email, customer address, customer phone number, and customer name are examples of PII that we will not accept. If you have questions on whether some of the information you're sending over is PII, please contact your Banyan representative with the question before sending your data.

Should I send you only credit card transactions or all transactions?

Send Banyan all transactions and, if possible, include the tender type with each transaction (including gift cards and purchases made with loyalty points).

What is an Acquirer Reference Number?

An Acquirer Reference Number, similar to an authorization code, is a unique numeric identifier that tags a credit or debit card transaction as it goes from the merchant’s bank (Acquirer) through to the cardholder's bank (Issuer). Also sometimes called a trace ID, this number is often used to determine where a transaction's funds are at a given time and to make a transaction traceable in case of an error in bank or merchant accounts. Note that this field is only available with some payment schemes (e.g. Visa and Mastercard).

What is Merchant Identification Number (MID) and where can I source it?

A merchant ID is a 15-digit code that is issued by your payment processor when you open a merchant account. You can find your MID using the following methods:

- Check your merchant account statement

- Contact your merchant account provider

- Inspect your payment terminal

- Review your bank statement

Do I have multiple Merchant Identification Numbers (MIDs)?

The majority of businesses have one MID. However, if you own multiple unique businesses that have separate merchant accounts, then you probably have separate MIDs for each one.
